Introduction

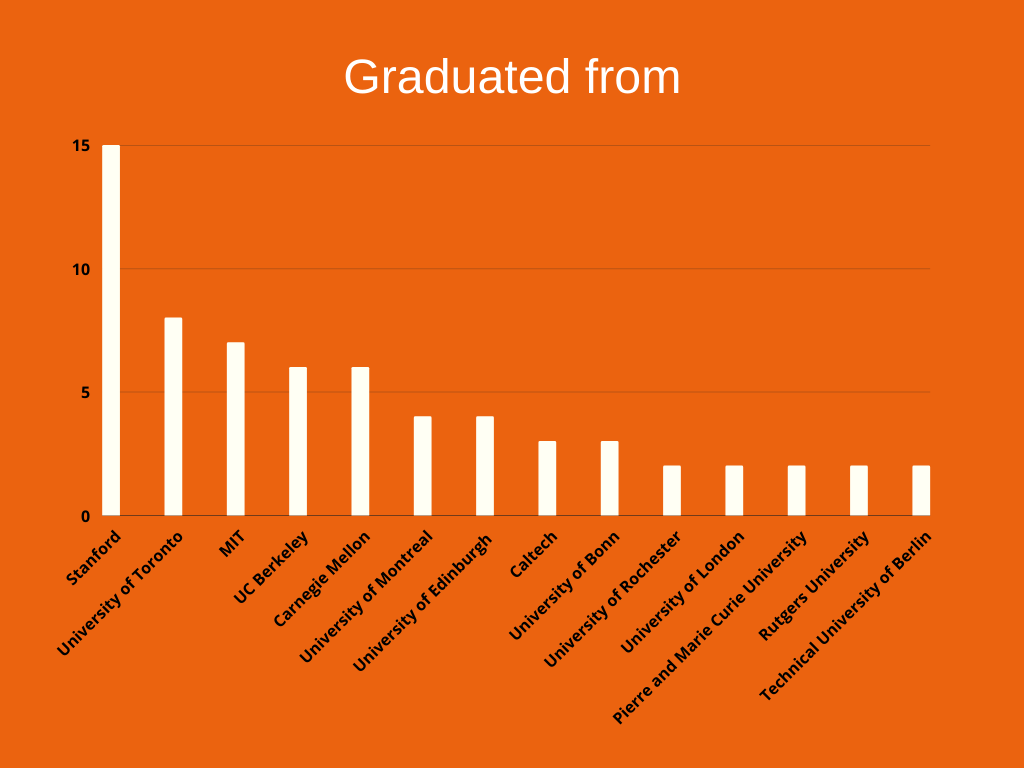

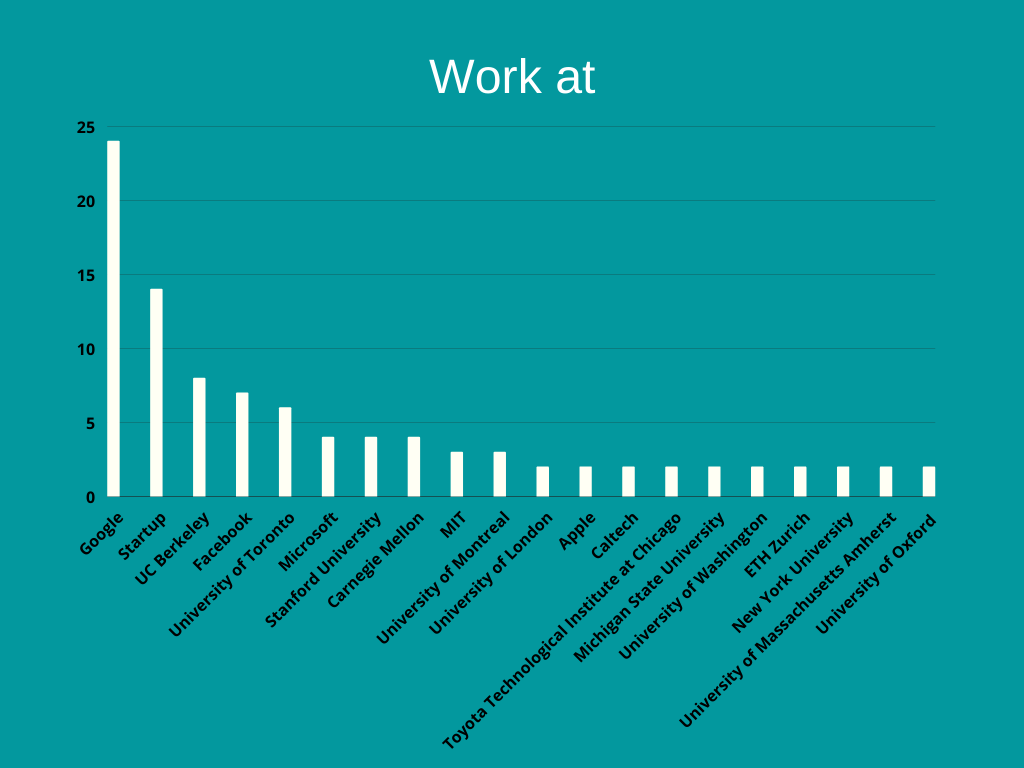

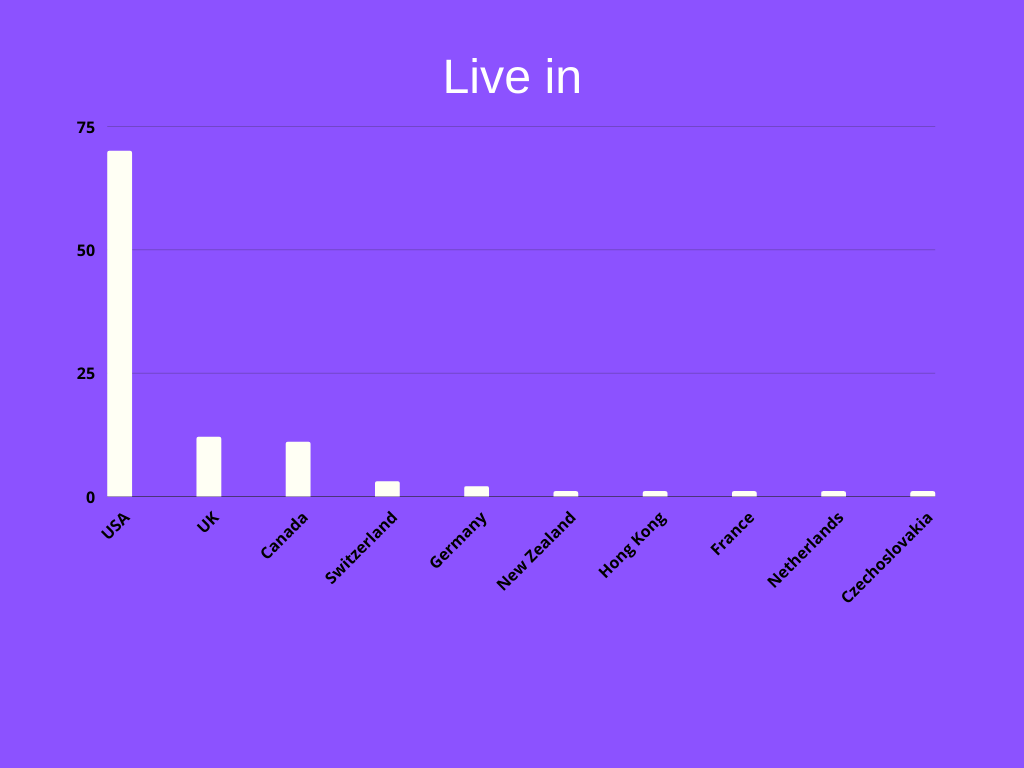

As the fields of artificial intelligence (AI) and machine learning (ML) grow rapidly, it is interesting and useful to know who the top experts are. Such a list helps readers follow these experts on social media and keep up with their work. It helps prospective graduate students choose an advisor, and it helps companies reach out to experts for consultation on their ideas. To compile the list, we ran extensive web searches and looked at metrics such as the number of citations on Google Scholar and the lists of IEEE award winners. In this post you will find a list of 103 world-class experts in machine learning and AI. For each expert we have added a short biography, the place where they currently work, and the school they graduated from. Based on our findings, we then looked at where most of these experts work and live, and which schools have produced the most experts.

List of experts

In this section we present the list of top experts in machine learning and AI, in alphabetical order.

1. Pieter Abbeel: Ph.D. Stanford, h-index: 101, #citations: 45,732

Pieter Abbeel: Professor of Electrical Engineering and Computer Science at UC Berkeley

Pieter Abbeel is a professor of electrical engineering and computer science, Director of the Berkeley Robot Learning Lab, and co-director of the Berkeley AI Research (BAIR) Lab at the University of California, Berkeley. He is also the co-founder of covariant.ai, a venture-funded start-up that aims to teach robots new, complex skills, and co-founder of Gradescope, an online grading system that has been implemented in over 500 universities nationwide. He is best known for his cutting-edge research in robotics and machine learning, particularly in deep reinforcement learning.

2. Yaser Abu-Mostafa: Ph.D. Caltech

Yaser Abu-Mostafa: Professor of Electrical Engineering and Computer Science at the California Institute of Technology

Yaser S. Abu-Mostafa is a Professor of Electrical Engineering and Computer Science at the California Institute of Technology, Chairman of Paraconic Technologies Ltd, and Chairman of Machine Learning Consultants LLC. His main fields of expertise are Machine Learning, Artificial Intelligence, and Computational Finance. He is the author of Amazon’s machine learning bestseller Learning from Data. His MOOC on machine learning has attracted more than seven million views.

3. Jimmy Ba: Ph.D. University of Toronto, h-index: 26, #citations: 66,184

Jimmy Ba: Assistant Professor in the Department of Computer Science at the University of Toronto

Jimmy Ba is an Assistant Professor in the Department of Computer Science at the University of Toronto. His research focuses on developing novel learning algorithms for neural networks. He is broadly interested in questions related to reinforcement learning, computational cognitive science, artificial intelligence, computational biology, and statistical learning theory. Professor Ba completed his Ph.D. under the supervision of Geoffrey Hinton. He is a Canada CIFAR AI chair.

4. Peter Bartlett: Ph.D. University of Queensland, Australia, h-index: 69, #citations: 35,140

Peter Bartlett: Professor in the Computer Science Division and Department of Statistics and Associate Director of the Simons Institute for the Theory of Computing at the University of California at Berkeley

Peter Bartlett is a professor in the Computer Science Division and Department of Statistics and Associate Director of the Simons Institute for the Theory of Computing at the University of California at Berkeley. He is the co-author, with Martin Anthony, of the book Neural Network Learning, has edited four other books, and has co-authored many papers in the areas of machine learning and statistical learning theory. He has consulted for a number of organizations, including General Electric, Telstra, and SAC Capital Advisors. He was awarded the Malcolm McIntosh Prize for Physical Scientist of the Year in Australia in 2001, and was chosen as an Institute of Mathematical Statistics Medallion Lecturer in 2008 and an IMS Fellow and Australian Laureate Fellow in 2011. He was elected to the Australian Academy of Science in 2015. His publications have been cited 35,062 times.

5. Samy Bengio: Ph.D. University of Montreal, h-index: 79, #citations: 38,734

Samy Bengio has been a research scientist at Google since 2007. He currently leads a group of research scientists in the Google Brain team, conducting research in many areas of machine learning such as deep architectures, representation learning, sequence processing, speech recognition, image understanding, large-scale problems, adversarial settings, etc. He was the general chair for Neural Information Processing Systems (NeurIPS) 2018, was the program chair for NeurIPS in 2017, is an action editor of the Journal of Machine Learning Research and on the editorial board of the Machine Learning Journal, was program chair of the International Conference on Learning Representations (ICLR 2015, 2016), general chair of BayLearn (2012-2015) and the Workshops on Machine Learning for Multimodal Interactions (MLMI'2004-2006), as well as the IEEE Workshop on Neural Networks for Signal Processing (NNSP'2002).

6. Yoshua Bengio: Ph.D. McGill University, h-index: 168, #citations: 319,682

Yoshua Bengio: Professor at the Department of Computer Science and Operations Research at the Université de Montréal and scientific director of the Montreal Institute for Learning Algorithms (MILA)

Yoshua Bengio is a Canadian computer scientist, most noted for his work on artificial neural networks and deep learning. He was a co-recipient of the 2018 ACM A.M. Turing Award for his work in deep learning. He is a professor at the Department of Computer Science and Operations Research at the Université de Montréal and scientific director of the Montreal Institute for Learning Algorithms (MILA). Bengio, Geoffrey Hinton, and Yann LeCun are referred to by some as the “Godfathers of AI” and “Godfathers of Deep Learning”.

7. Christopher M. Bishop: Ph.D. University of Edinburgh, h-index: 66, #citations: 114,949

Christopher M. Bishop: Laboratory Director at Microsoft Research Cambridge, Professor of Computer Science at the University of Edinburgh, and Fellow of Darwin College, Cambridge

Christopher Michael Bishop is the Laboratory Director at Microsoft Research Cambridge, Professor of Computer Science at the University of Edinburgh, and a Fellow of Darwin College, Cambridge. Bishop’s research focuses on machine learning, which enables computers to learn from data and experience. He was awarded the Tam Dalyell Prize in 2009 and the Rooke Medal from the Royal Academy of Engineering in 2011. He gave the Royal Institution Christmas Lectures in 2008 and the Turing Lecture in 2010. Bishop was elected a Fellow of the Royal Academy of Engineering (FREng) in 2004, a Fellow of the Royal Society of Edinburgh (FRSE) in 2007, and a Fellow of the Royal Society (FRS) in 2017. He is well known for his book “Pattern Recognition and Machine Learning”.

8. David Blei: Ph.D. UC Berkeley, h-index: 88, #citations: 87,959

David Blei: Professor in the Statistics and Computer Science departments at Columbia University

David M. Blei is a professor in the Statistics and Computer Science departments at Columbia University. Prior to fall 2014, he was an associate professor in the Department of Computer Science at Princeton University. His work is primarily in machine learning. His research interests include topic models, and he was one of the original developers of latent Dirichlet allocation, along with Andrew Ng and Michael I. Jordan. As of June 18, 2020, his publications have been cited 83,214 times. Blei received the ACM Infosys Foundation Award in 2013. (This award is given to a computer scientist under the age of 45. It has since been renamed the ACM Prize in Computing.) In 2015 he was named a Fellow of the ACM “For contributions to the theory and practice of probabilistic topic modeling and Bayesian machine learning”.

9. Avrim Blum: Ph.D. MIT, h-index: 73, #citations: 32,712

Avrim Blum: Professor and Chief Academic Officer at Toyota Technological Institute at Chicago (TTIC)

Avrim Blum is Professor and Chief Academic Officer at the Toyota Technological Institute at Chicago (TTIC or TTI-Chicago), a PhD-granting computer science institute located on the University of Chicago campus that focuses on machine learning, algorithms, AI (robotics, natural language, speech, and vision), and computational biology. His main research interests are in machine learning theory, approximation algorithms, on-line algorithms, algorithmic game theory/mechanism design, the theory of database privacy, algorithmic fairness, and non-worst-case analysis of algorithms. Before joining TTIC, he spent 25 years on the CS faculty at Carnegie Mellon University.

10. Leon Bottou: Ph.D. Université Paris-Sud, h-index: 73, #citations: 83,967

Leon Bottou: Research Lead at Facebook Artificial Intelligence Research

Léon Bottou is a researcher best known for his work in machine learning and data compression. His work presents stochastic gradient descent as a fundamental learning algorithm. He is also one of the main creators of the DjVu image compression technology (together with Yann LeCun and Patrick Haffner), and the maintainer of DjVuLibre, the open-source implementation of DjVu. He is the original developer of the Lush programming language. In 1996, he joined AT&T Labs and worked primarily on the DjVu image compression technology. Between 2002 and 2010, he was a research scientist at NEC Laboratories in Princeton, New Jersey, where he focused on the theory and practice of machine learning with large-scale datasets, on-line learning, and stochastic optimization methods. He developed the open-source software LaSVM for fast large-scale support vector machines, as well as stochastic gradient descent software for training linear SVMs and conditional random fields. In 2010 he joined the Microsoft adCenter in Redmond, Washington, and in 2012 became a Principal Researcher at Microsoft Research in New York City. In March 2015 he joined Facebook Artificial Intelligence Research, also in New York City, as a research lead.

11. Matt Botvinick: Ph.D. Carnegie Mellon University, h-index: 78, #citations: 47,968

Matt Botvinick: Director of Neuroscience Research at DeepMind and Honorary Professor at the Gatsby Computational Neuroscience Unit at University College London

Matthew Botvinick is Director of Neuroscience Research at DeepMind and Honorary Professor at the Gatsby Computational Neuroscience Unit at University College London. Dr. Botvinick completed his undergraduate studies at Stanford University in 1989 and medical studies at Cornell University in 1994, before completing a Ph.D. in psychology and cognitive neuroscience at Carnegie Mellon University in 2001. He served as Assistant Professor of Psychiatry and Psychology at the University of Pennsylvania until 2007 and Professor of Psychology and Neuroscience at Princeton University until joining DeepMind in 2016. Dr. Botvinick’s work at DeepMind straddles the boundaries between cognitive psychology, computational and experimental neuroscience, and artificial intelligence.

12. Jaime Carbonell: Ph.D. Yale, h-index: 75, #citations: 31,260

Jaime Carbonell: formerly University Professor and Allen Newell Professor in the School of Computer Science at Carnegie Mellon University

Jaime Guillermo Carbonell was a computer scientist who made seminal contributions to the development of natural language processing tools and technologies. His extensive research in machine translation resulted in the development of several state-of-the-art language translation and artificial intelligence systems. He did his Ph.D. under Dr. Roger Schank at Yale University. He joined Carnegie Mellon University as an assistant professor of computer science in 1979. His interests spanned several areas of artificial intelligence, language technologies, and machine learning. In particular, his research focused on areas such as text mining (extraction, categorization, novelty detection) and in new theoretical frameworks such as a unified utility-based theory bridging information retrieval, summarization, free-text question-answering, and related tasks. He also worked on machine translation, both high-accuracy knowledge-based MT and machine learning for corpus-based MT (such as generalized example-based MT).

13. François Chollet: graduated from MINES ParisTech, h-index: 13, #citations: 13,872

François Chollet: Artificial Intelligence Researcher at Google

François Chollet is the creator of Keras (keras.io), a leading deep learning API, and author of the popular textbook “Deep Learning with Python”. He is also a machine learning researcher at Google Brain and a contributor to the TensorFlow machine learning platform. His primary interests are:

- Understanding the nature of abstraction and developing algorithms capable of autonomous abstraction (i.e. general intelligence).

- Democratizing the development and deployment of AI technology by making it easier to use and explaining it clearly.

- Leveraging technology, in particular AI, to help people gain greater agency over their circumstances and reach their full potential (e.g. EdTech, steerable recommendation engines, personal productivity tech, etc.).

- Understanding and simulating the early stages of human cognitive development (e.g. developmental psychology, cognitive-developmental robotics).

14. William W. Cohen: Ph.D. Rutgers University, h-index: 81, #citations: 35,257

William W. Cohen: Director of Research Engineering at Google

William Cohen is a Principal Scientist at Google. He received a Ph.D. in Computer Science from Rutgers University in 1990. From 1990 to 2000 he worked at AT&T Bell Labs, and from April 2000 to May 2002 he worked at Whizbang Labs, a company specialising in extracting information from the web. From 2002 to 2018, Dr. Cohen worked at Carnegie Mellon University in the Machine Learning Department, with a joint appointment in the Language Technology Institute, as an Associate Research Professor, a Research Professor, and a Professor. Dr. Cohen was also the Director of the Undergraduate Minor in Machine Learning at CMU and co-Director of the Master of Science in ML Program. He is a past president of the International Machine Learning Society. He is an AAAI Fellow and won the 2008 SIGMOD “Test of Time” Award for the most influential SIGMOD paper of 1998 and the 2014 SIGIR “Test of Time” Award for the most influential SIGIR paper of 2002-2004. Dr. Cohen’s research interests include information integration and machine learning, particularly information extraction, text categorization, and learning from large datasets. He has a long-standing interest in statistical relational learning and in learning models of, or learning from, data that display non-trivial structure. He holds seven patents related to learning, discovery, information retrieval, and data integration, and is the author of more than 200 publications.

15. Greg Corrado: Ph.D. Stanford, h-index: 45, #citations: 83,279

Greg Corrado: Distinguished Scientist and Senior Research Director at Google AI

Greg Corrado is a Distinguished Scientist and a Senior Research Director at Google AI, interested in biological neuroscience, artificial intelligence, and scalable machine learning. He has published in fields ranging across behavioral economics, neuromorphic device physics, systems neuroscience, and deep learning. At Google, he has worked for some time on brain-inspired computing and most recently has served as one of the founding members and the co-technical lead of Google’s large scale deep neural networks project.

16. Corinna Cortes: Ph.D. University of Rochester, h-index: 55, #citations: 60,210

Corinna Cortes is a Danish computer scientist known for her contributions to machine learning. She is currently the Head of Google Research, New York, and an Editorial Board member of the journal Machine Learning. Cortes received her M.S. degree in physics from Copenhagen University in 1989. In the same year, she joined AT&T Bell Labs as a researcher and remained there for about ten years. She received her Ph.D. in computer science from the University of Rochester in 1993. Her research covers a wide range of topics in machine learning, including support vector machines and data mining. In 2008, she and Vladimir Vapnik jointly received the Paris Kanellakis Theory and Practice Award for their work on the theoretical foundations of support vector machines (SVMs), a highly effective algorithm for supervised learning. Today, SVMs are among the most frequently used algorithms in machine learning, with practical applications including medical diagnosis and weather forecasting.

17. Aaron Courville: Ph.D. Carnegie Mellon University, h-index: 72, #citations: 84,951

Aaron Courville: Assistant Professor in the Department of Computer Science and Operations Research (DIRO) at the University of Montreal

Aaron Courville is an Assistant Professor in the Department of Computer Science and Operations Research (DIRO) at the University of Montreal, and a member of Mila – Quebec Artificial Intelligence Institute. His current research interests focus on the development of deep learning models and methods. He is particularly interested in developing probabilistic models and novel inference methods. He has mainly focused on applications to computer vision but is also interested in other domains such as natural language processing, audio signal processing, speech understanding, and just about any other artificial-intelligence-related task.

18. Nello Cristianini: Ph.D. University of Bristol, h-index: 59, #citations: 58,357

Nello Cristianini: Professor of Artificial Intelligence in the Department of Computer Science at the University of Bristol

Nello Cristianini is a Professor of Artificial Intelligence in the Department of Computer Science at the University of Bristol and was previously an Associate Professor at the University of California, Davis. His research contributions encompass the fields of machine learning, artificial intelligence, and bioinformatics. In particular, his work has focused on the statistical analysis of learning algorithms and its application to support vector machines, kernel methods, and other algorithms. Cristianini is the co-author of two widely known books in machine learning, An Introduction to Support Vector Machines and Kernel Methods for Pattern Analysis, and of a book in bioinformatics, “Introduction to Computational Genomics”. His recent research has focused on the big-data analysis of newspaper content, the analysis of social media content, and the ethical implications of data-driven approaches to science and society. His earlier research focused on unified theoretical frameworks for statistical pattern analysis, machine learning and artificial intelligence, machine translation, and bioinformatics. Cristianini is a recipient of the Royal Society Wolfson Research Merit Award and a current holder of a European Research Council Advanced Grant. In June 2014, he was included in a list of the “most influential scientists of the decade” compiled by Thomson Reuters. In December 2016 he was included in AMiner’s list of the Top 100 most influential researchers in Machine Learning. In 2017 he was the keynote speaker at the Annual STOA Lecture at the European Parliament.

19. Trevor Darrell: Ph.D. MIT, h-index: 128, #citations: 121,373

Trevor Darrell: Professor of CS and EE in the EECS Department at UC Berkeley

Trevor Darrell is a professor of CS and EE in the EECS Department at UC Berkeley. He founded and co-leads the Berkeley Artificial Intelligence Research (BAIR) lab, the Berkeley DeepDrive (BDD) Industrial Consortia, and the BAIR Commons program in partnership with Facebook, Google, Microsoft, Amazon, and other partners. He is also the Faculty Director of the PATH research center at UC Berkeley, and previously led the Vision group at the UC-affiliated International Computer Science Institute in Berkeley. Prior to that, Prof. Darrell was on the faculty of the MIT EECS department from 1999 to 2008, where he directed the Vision Interface Group. Darrell’s group develops algorithms for large-scale perceptual learning, including object and activity recognition and detection, for a variety of applications including autonomous vehicles, media search, and multimodal interaction with robots and mobile devices. His areas of interest include computer vision, machine learning, natural language processing, and perception-based human-computer interfaces. He also serves as a consulting Chief Scientist for the start-up Grabango, which is developing checkout-free shopping experiences, and for Nexar, which is pioneering city-scale visual driving analytics. Darrell is on the scientific advisory board of several other ventures, including WaveOne, SafelyYou, and KiwiBot. Previously, Darrell advised Pinterest, Tyzx (acquired by Intel), IQ Engines (acquired by Yahoo), Koozoo, BotSquare/Flutter (acquired by Google), MetaMind (acquired by Salesforce), and DeepScale.

20. Jeff Dean: Ph.D. University of Washington, h-index: 82, #citations: 150,951

Jeffrey Adgate “Jeff” Dean is an American computer scientist and software engineer. He is currently the lead of Google AI, Google’s AI division. He received a Ph.D. in Computer Science from the University of Washington in 1996, working under Craig Chambers on compilers and whole-program optimization techniques for object-oriented programming languages. While at Google, he designed and implemented large portions of the company’s advertising, crawling, indexing, and query serving systems, along with various pieces of the distributed computing infrastructure that underlies most of Google’s products. At various times, he has also worked on improving search quality, statistical machine translation, and various internal software development tools, and has had significant involvement in the engineering hiring process. The projects Dean has worked on include:

- Spanner, a scalable, multi-version, globally distributed and synchronously replicated database

- Some of the production system design and statistical machine translation system for Google Translate

- BigTable, a large-scale semi-structured storage system

- MapReduce, a system for large-scale data processing applications

- LevelDB, an open-source on-disk key-value store

- DistBelief, a proprietary machine-learning system for deep neural networks that was eventually refactored into TensorFlow

- TensorFlow, an open-source machine-learning software library

He was an early member of Google Brain, a team that studies large-scale artificial neural networks, and he has headed Google’s artificial intelligence efforts since they were split from Google Search. He was elected to the National Academy of Engineering in 2009, which recognized his work on “the science and engineering of large-scale distributed computer systems.”

21. Kalyanmoy Deb: Ph.D. University of Alabama, h-index: 122, #citations: 147,525

Kalyanmoy Deb: Professor in the Department of Computer Science and Engineering and the Department of Mechanical Engineering at Michigan State University

Kalyanmoy Deb is an Indian computer scientist. Since 2013, Deb has held the Herman E. & Ruth J. Koenig Endowed Chair in the Department of Electrical and Computer Engineering at Michigan State University, where he is also a Professor in the Department of Computer Science and Engineering and the Department of Mechanical Engineering. Deb established the Kanpur Genetic Algorithms Laboratory at the Indian Institute of Technology, Kanpur in 1997, and the Computational Optimization and Innovation (COIN) Laboratory at Michigan State University in 2013. In 2001, Wiley published Deb’s textbook Multi-Objective Optimization using Evolutionary Algorithms as part of its series on “Systems and Optimization”. In an analysis of the network of authors in the academic field of evolutionary computation by Carlos Cotta and Juan-Julián Merelo, Deb was identified as one of the most central authors in the community and was designated a “sociometric superstar” of the field. Deb is a highly cited researcher with 138,000+ Google Scholar citations and an h-index of 116. According to the Web of Science Core Collection database, his IEEE TEC paper on NSGA-II is the first paper whose authors are all Indian to receive more than 5,000 citations. He also proposed an extended evolutionary multi-objective optimization (EMO) method, NSGA-III (published in IEEE TEC in 2014), for solving many-objective optimization problems involving 10 or more objectives.

22. Thomas G. Dietterich: Ph.D. Stanford, h-index: 77, #citations: 42,257

Thomas G. Dietterich: Emeritus Professor of Computer Science at Oregon State University

Thomas G. Dietterich is an emeritus professor of computer science at Oregon State University. He is one of the founders of the field of machine learning. He served as executive editor of the journal Machine Learning (1992–98) and helped found the Journal of Machine Learning Research. In response to media attention on the dangers of artificial intelligence, Dietterich has offered an academic perspective to a broad range of media outlets, including National Public Radio, Business Insider, Microsoft Research, CNET, and The Wall Street Journal. Among his research contributions are the application of error-correcting output codes to multi-class classification, the formalization of the multiple-instance problem, the MAXQ framework for hierarchical reinforcement learning, and the development of methods for integrating non-parametric regression trees into probabilistic graphical models. Professor Dietterich is interested in all aspects of machine learning, and his research follows three major strands. First, he is interested in the fundamental questions of artificial intelligence and how machine learning can provide the basis for building integrated intelligent systems. Second, he is interested in ways that people and computers can collaborate to solve challenging problems. Third, he is interested in applying machine learning to problems in the ecological sciences and ecosystem management as part of the emerging field of computational sustainability.

23. Eibe Frank: Ph.D. University of Waikato, New Zealand

Eibe Frank: Professor of Computer Science at the University of Waikato, New Zealand

Professor Frank is a computer scientist at the University of Waikato, New Zealand, whose primary area of interest is machine learning and its applications. He is a core developer of the WEKA machine learning software and has more than 100 publications on machine learning methods and their application to data mining, text mining, and areas of research outside computer science. He has 83,750 citations according to Google Scholar.

24. Brendan Frey: Ph.D. University of Toronto, h-index: 73, #citations: 39,654

Brendan Frey: Founder and CEO of Deep Genomics, Cofounder of the Vector Institute for Artificial Intelligence, and Professor of Engineering and Medicine at the University of Toronto

Brendan John Frey is a Canadian-born entrepreneur, engineer and scientist. He is Founder and CEO of Deep Genomics, Cofounder of the Vector Institute for Artificial Intelligence, and Professor of Engineering and Medicine at the University of Toronto. Frey is a pioneer in the development of machine learning and artificial intelligence methods, and in their use for accurately determining the consequences of genetic mutations and designing medications that can slow, stop or reverse the progression of disease. As far back as 1995, Frey co-invented one of the first deep learning methods, the wake-sleep algorithm. He also co-invented the affinity propagation algorithm for clustering and data summarization, and the factor graph notation for probability models. In the late 1990s, Frey was a leading researcher in the areas of computer vision, speech recognition, and digital communications. In 2002, a personal crisis led Frey to confront the tragic gap between our ability to measure a patient’s mutations and our ability to understand and treat their consequences. Recognizing that biology is too complex for humans to understand, that the decades to come would bring exponential growth in biological data, and that machine learning is the best technology we have for discovering relationships in large datasets, Frey set out to build machine learning systems that could accurately predict genome and cell biology. His group pioneered much of the early work in the field and over the next 15 years published more papers in leading-edge journals than any other academic or industrial research lab. In 2015, Frey founded Deep Genomics with the goal of building a company that can produce effective and safe genetic medicines more rapidly and with a higher rate of success than was previously possible. The company has received $60 million in funding to date from leading Bay Area investors, including the backers of SpaceX and Tesla. In 2019, Deep Genomics became the first company to announce a drug candidate discovered by artificial intelligence. Frey studied neural networks and graphical models as a doctoral candidate at the University of Toronto under the supervision of Geoffrey Hinton.

25. Dileep George: Ph.D. Stanford, h-index: 28, #citations: 3,344

Dileep George: Co-founder of Vicarious, an artificial intelligence company based in the San Francisco Bay Area, California

Dileep George is an AI and neuroscience researcher. In 2005, George pioneered hierarchical temporal memory and cofounded the AI research startup Numenta, Inc. with Jeff Hawkins and Donna Dubinsky. In 2010, George left Numenta to join D. Scott Phoenix in founding Vicarious, an AI research project funded by internet billionaires Peter Thiel and Dustin Moskovitz. George has authored 22 patents and many peer-reviewed papers on the mathematics of brain circuits. His research has been featured in the New York Times, BusinessWeek, Scientific Computing, Wired, Wall Street Journal, and on Bloomberg Television. George received his Ph.D. in Electrical Engineering from Stanford University in 2006 and continues his neuroscience research collaboration as Visiting Fellow at the Redwood Center for Theoretical Neuroscience at the University of California, Berkeley.

26. Zoubin Ghahramani: Ph.D. MIT, h-index: 106, #citations: 60,544

Zoubin Ghahramani: Professor of Information Engineering at the University of Cambridge, and Uber’s Chief Scientist

Zoubin Ghahramani is a British-Iranian researcher and Professor of Information Engineering at the University of Cambridge. He holds joint appointments at University College London and the Alan Turing Institute, and has been a Fellow of St John’s College, Cambridge since 2009. He was Associate Research Professor at Carnegie Mellon University School of Computer Science from 2003 to 2012. He is also the Chief Scientist of Uber and Deputy Director of the Leverhulme Centre for the Future of Intelligence. He obtained his Ph.D. from the Department of Brain and Cognitive Sciences at the Massachusetts Institute of Technology, supervised by Michael I. Jordan and Tomaso Poggio. Following his Ph.D., Ghahramani moved to the University of Toronto in 1995 as a Postdoctoral Fellow in the Artificial Intelligence Lab, working with Geoffrey Hinton. Ghahramani was elected Fellow of the Royal Society (FRS) in 2015. Ghahramani has made significant contributions in the areas of Bayesian machine learning, as well as graphical models and computational neuroscience. His current research focuses on nonparametric Bayesian modelling and statistical machine learning. He has also worked on artificial intelligence, information retrieval, bioinformatics, and statistics, which provide the mathematical foundations for handling uncertainty, making decisions, and designing learning systems. He co-founded the company Geometric Intelligence in 2014 with Gary Marcus, Doug Bemis, and Ken Stanley. After Uber acquired the startup, he transferred to Uber’s A.I. Labs in 2016, and just four months later he became Chief Scientist, replacing Gary Marcus.

27. Ben Goertzel: Ph.D. Temple University, h-index: 32, #citations: 4,470

Ben Goertzel: CEO and founder of SingularityNET, a project combining artificial intelligence and blockchain to democratize access to artificial intelligence

Ben Goertzel is an artificial intelligence researcher. He received his Ph.D. in Mathematics from Temple University in 1990, under the supervision of Avi Lin. Goertzel is the CEO and founder of SingularityNET, a project combining artificial intelligence and blockchain to democratize access to artificial intelligence. He was Director of Research at the Machine Intelligence Research Institute (formerly the Singularity Institute). He is also chief scientist and chairman of the AI software company Novamente LLC, chairman of the OpenCog Foundation, and an advisor to Singularity University. Goertzel was the Chief Scientist of Hanson Robotics, the company that created Sophia the Robot. In May 2007, Goertzel gave a Google Tech Talk about his approach to creating Artificial General Intelligence. He defines intelligence as the ability to detect patterns in the world and in the agent itself, measurable in terms of the emergent behavior of “achieving complex goals in complex environments”. In his approach, a “baby-like” artificial intelligence is initialized and then trained as an agent in a simulated or virtual world such as Second Life to produce a more powerful intelligence. Knowledge is represented in a network whose nodes and links carry probabilistic truth values as well as “attention values”, with the attention values resembling the weights in a neural network. Several algorithms operate on this network, the central one being a combination of a probabilistic inference engine and a custom version of evolutionary programming. The 2012 documentary The Singularity by independent filmmaker Doug Wolens showcased Goertzel’s vision of artificial general intelligence.

28. Ian Goodfellow: Ph.D. Université de Montréal, h-index: 67, #citations: 105,538

Ian Goodfellow: Director of Machine Learning in the Special Projects Group at Apple Inc.

Ian J. Goodfellow is a machine learning researcher, currently employed at Apple Inc. as its director of machine learning in the Special Projects Group. He was previously a research scientist at Google Brain and has made several contributions to the field of deep learning. Goodfellow obtained his B.S. and M.S. in computer science from Stanford University under the supervision of Andrew Ng, and his Ph.D. in machine learning from the Université de Montréal in April 2014, under the supervision of Yoshua Bengio and Aaron Courville. His thesis is titled “Deep learning of representations and its application to computer vision”. After graduating, Goodfellow joined the Google Brain research team. He then left Google to join the newly founded OpenAI, before returning to Google Research in March 2017. Goodfellow is best known for inventing generative adversarial networks (GANs), and he is the lead author of the textbook Deep Learning. At Google, he developed a system enabling Google Maps to automatically transcribe addresses from photos taken by Street View cars, and he demonstrated security vulnerabilities of machine learning systems. In 2017, Goodfellow was named to MIT Technology Review’s 35 Innovators Under 35, and in 2019 he was included in Foreign Policy’s list of 100 Global Thinkers. Also in 2019, he left Google and joined Apple Inc. as director of machine learning.

29. Thore Graepel: Ph.D. Technical University of Berlin, h-index: 59, #citations: 28,352

Thore Graepel: Research Group Lead at DeepMind, and part-time Chair of Machine Learning at University College London

Thore Graepel is a research group lead at DeepMind and holds a part-time position as Chair of Machine Learning at University College London. He studied physics at the University of Hamburg, Imperial College London, and Technical University of Berlin, where he also obtained his Ph.D. in machine learning in 2001. He held post-doctoral positions at ETH Zurich and Royal Holloway College, University of London, before joining Microsoft Research in Cambridge in 2003, where he co-founded the Online Services and Advertising group. Major applications of Thore’s work include Xbox Live’s TrueSkill system for ranking and matchmaking, the AdPredictor framework for click-through rate prediction in Bing, and the Matchbox recommender system, which inspired the recommendation engine of Xbox Live Marketplace. Dr. Graepel’s research interests are in artificial intelligence and machine learning and include probabilistic graphical models, reinforcement learning, game theory, and multi-agent systems. He has published over one hundred peer-reviewed papers, is a named co-inventor on dozens of patents, serves on the editorial boards of JMLR and MLJ, and is a founding editor of the book series Machine Learning & Pattern Recognition at Chapman & Hall/CRC. At DeepMind, he has returned to his original passion of understanding and creating intelligence, and contributed to creating AlphaGo, the first computer program to defeat a human professional player in the full-sized game of Go, a feat previously thought to be at least a decade away. Dr. Graepel has extensive experience in technical AI research and ethical publication practices and is part of a cross-functional group of DeepMind team members who come together to review research proposals and assess potential downstream impacts.

30. Carlos Guestrin: Ph.D. Stanford, h-index: 72, #citations: 38,677

Carlos Guestrin: Amazon Professor of Machine Learning in the Computer Science & Engineering Department of the University of Washington, and Senior Director of Machine Learning and AI at Apple Inc.

Carlos Guestrin is the Amazon Professor of Machine Learning in the Computer Science & Engineering Department of the University of Washington. He is also the Senior Director of Machine Learning and AI at Apple, following Apple’s acquisition of Turi Inc. (formerly GraphLab and Dato). Professor Guestrin co-founded Turi, which developed a platform for developers and data scientists to build and deploy intelligent applications. His team also released a number of popular open-source projects, including XGBoost, MXNet, TVM, Turi Create, LIME, GraphLab/PowerGraph, SFrame, and GraphChi. His previous positions include the Finmeccanica Associate Professor at Carnegie Mellon University and senior researcher at the Intel Research Lab in Berkeley. Carlos received his M.Sc. and Ph.D. in Computer Science from Stanford University in 2000 and 2003, respectively, and a Mechatronics Engineer degree from the Polytechnic School of the University of Sao Paulo, Brazil, in 1998. Carlos’s work has received awards at a number of conferences and journals: KDD 2007, 2010 and 2019, IPSN 2005 and 2006, VLDB 2004, NIPS 2003 and 2007, UAI 2005, ICML 2005 and 2016, AISTATS 2010, JAIR in 2007 and 2012, ACL 2018, and JWRPM in 2009. He is also a recipient of the ONR Young Investigator Award, NSF Career Award, Alfred P. Sloan Fellowship, IBM Faculty Fellowship, the Siebel Scholarship and the Stanford Centennial Teaching Assistant Award. Carlos was named one of the 2008 ‘Brilliant 10’ by Popular Science Magazine, and received the IJCAI Computers and Thought Award and the Presidential Early Career Award for Scientists and Engineers (PECASE). He is a former member of the Information Sciences and Technology (ISAT) advisory group for DARPA.

31. Isabelle Guyon: Ph.D. Pierre and Marie Curie University, Paris, h-index: 66, #citations: 100,069

Isabelle Guyon: Chair Professor at the University of Paris-Saclay

Isabelle Guyon is a French-born researcher in machine learning known for her work on support-vector machines, artificial neural networks, and bioinformatics. She is a Chair Professor at the University of Paris-Saclay. She is considered a pioneer in the field for her co-invention of support-vector machines with Vladimir Vapnik and Bernhard Boser. After graduating from the French engineering school ESPCI Paris in 1985, she joined the group of Gérard Dreyfus at the Université Pierre-et-Marie-Curie to do a Ph.D. on neural network architectures and training. She worked at Bell Labs for six years, where she explored several research areas, from neural networks to pattern recognition and computational learning theory, with applications to handwriting recognition. There she collaborated with Yann LeCun, Léon Bottou, Vladimir Vapnik, Corinna Cortes, Yoshua Bengio, and Patrice Simard, and met her future husband, Bernhard Boser. In 1996, Guyon left Bell Labs and founded her own machine learning consulting company, Clopinet, in Berkeley. She became interested in medical applications and used her previous work to classify the genes responsible for different types of cancer. In 2011 she founded ChaLearn, a non-profit organization that creates machine learning challenges open to everyone. She was Program Chair of NeurIPS 2016 and General Chair of NeurIPS 2017. She is also an Action Editor for the Journal of Machine Learning Research and Series Editor of the series Challenges in Machine Learning. She is a member of the European Laboratory for Learning and Intelligent Systems. In 2016, Guyon returned to France to take the Chair Professorship in Big Data between the University of Paris-Saclay and INRIA. In 2020, she received the BBVA Foundation Frontiers of Knowledge Award, together with Bernhard Schölkopf and Vladimir Vapnik, for her work in machine learning.

32. Patrick Haffner: Ph.D. École Nationale Supérieure des Télécommunications, Paris, h-index: 34, #citations: 49,085

Patrick Haffner: Lead Inventive Scientist, Interactions LLC.

Patrick Haffner has been an expert in machine learning with humans in the loop since 1995. With Yann LeCun, he led the first industrial deployment of the newly invented convolutional neural networks, for check recognition. He has since provided algorithms and software to scale up AI solutions, in particular for AT&T Services and Network. He currently leads machine learning research at Interactions, developing new ways to integrate AI into interactive conversational systems, with a particular interest in AI algorithms that can say “I don’t know” and call on humans for help when needed.

33. Demis Hassabis: Ph.D. University College London, h-index: 60, #citations: 41,699

Demis Hassabis: Co-founder and CEO of DeepMind (acquired by Google)

Demis Hassabis is the co-founder and CEO of DeepMind, a neuroscience-inspired AI company that develops general-purpose learning algorithms and uses them to help tackle some of the world’s most pressing challenges. DeepMind’s mission is to “solve intelligence” and then use intelligence “to solve everything else”. Since its founding in London in 2010, DeepMind has published over 200 peer-reviewed papers, five of them in the scientific journal Nature, an unprecedented achievement for a computer science lab. DeepMind’s groundbreaking work includes the development of deep reinforcement learning, which combines deep learning and reinforcement learning. In 2014, DeepMind was acquired by Google and is now part of Alphabet. Before founding DeepMind, and after leading successful technology startups for a decade, Hassabis completed a Ph.D. in cognitive neuroscience at University College London, followed by postdocs at MIT and Harvard. He is a five-time World Games Champion, a recipient of the Royal Society’s Mullard Award, and a fellow of the Royal Society of Arts and the Royal Academy of Engineering. A child prodigy in chess, Hassabis reached master standard at the age of 13 with an Elo rating of 2300. Since the Google acquisition, DeepMind has notched up a number of significant achievements, perhaps the most notable being the creation of AlphaGo, a program that defeated world champion Lee Sedol at the complex game of Go. Go had long been considered a holy grail of AI because of its enormous number of possible board positions and its resistance to existing programming techniques. AlphaGo beat European champion Fan Hui 5-0 in October 2015 before winning 4-1 against former world champion Lee Sedol in March 2016. Hassabis has predicted that Artificial Intelligence will be “one of the most beneficial technologies of mankind ever” but that significant ethical issues remain. Here are some of Hassabis’s honors and awards:

- 2007 - Science Magazine Top 10 Scientific Breakthroughs (for neuroscience research on imagination)

- 2009 - Fellow of the Royal Society of Arts (FRSA)

- 2013 - Listed on WIRED’s ‘Smart 50’

- 2014 - Mullard Award of the Royal Society

- 2014 - Third most influential Londoner according to the London Evening Standard

- 2015 - Computer Science Fellow Benefactor, Queens’ College, Cambridge

- 2015 - Financial Times top 50 Entrepreneurs in Europe

- 2016 - Financial Times Digital Entrepreneur of the Year

- 2016 - Honorary Fellow, University College London

- 2016 - London Evening Standard list of influential Londoners, number 6

- 2016 - Royal Academy of Engineering Silver Medal

- 2016 - WIRED Leadership in Innovation

- 2016 - Nature’s “ten people who mattered this year”

- 2017 - Time 100: The 100 Most Influential People

- 2017 - The Asian Awards: Outstanding Achievement in Science and Technology

- 2017 - Fellow of the Royal Academy of Engineering

- 2017 - American Academy of Achievement: Golden Plate Award

- 2017 - Appointed Commander of the Order of the British Empire (CBE) in the 2018 New Year Honours for “services to Science and Technology”.

- 2018 - Elected a Fellow of the Royal Society (FRS) in May 2018

- 2018 - Adviser to the UK’s Government Office for Artificial Intelligence

- 2019 - Winner of UKtech50 (the 50 most influential people in UK technology) from Computer Weekly

- 2020 - The 50 most influential people in Britain from British GQ magazine

- 2020 - Dan David Prize

34. Trevor Hastie: Ph.D. Stanford, h-index: 132, #citations: 243,031

Trevor Hastie: John A. Overdeck Professor of Mathematical Sciences and Professor of Statistics at Stanford University

Trevor John Hastie is a South African and American statistician and computer scientist. He is currently the John A. Overdeck Professor of Mathematical Sciences and Professor of Statistics at Stanford University. Hastie is known for his contributions to applied statistics, especially in machine learning, data mining, and bioinformatics. He has authored several popular books on statistical learning, including The Elements of Statistical Learning: Data Mining, Inference, and Prediction. He has been listed as an ISI Highly Cited Author in Mathematics by the ISI Web of Knowledge. Hastie worked at AT&T Bell Laboratories in Murray Hill, New Jersey, for nine years before joining Stanford University in 1994 as Associate Professor in Statistics and Biostatistics. He was promoted to full Professor in 1999 and chaired the Department of Statistics at Stanford from 2006 to 2009. In 2013 he was named the John A. Overdeck Professor of Mathematical Sciences. Hastie has been a Fellow of the Royal Statistical Society since 1979. He is also an elected Fellow of several professional and scholarly societies, including the Institute of Mathematical Statistics, the American Statistical Association, and the South African Statistical Society. He is a recipient of the ‘Myrto Lefkopoulou Distinguished Lectureship’ award of the Biostatistics Department at the Harvard School of Public Health. In 2018, he was elected a member of the National Academy of Sciences, and in 2019 he became a foreign member of the Royal Netherlands Academy of Arts and Sciences.

35. Jeffrey Hawkins: graduated from Cornell University

Jeffrey Hawkins: Co-founder of Numenta and founder of the Redwood Center for Theoretical Neuroscience

Jeffrey Hawkins is the American founder of Palm Computing and Handspring, where he invented the PalmPilot and the Treo, respectively. He has since turned to neuroscience full-time, founding the Redwood Center for Theoretical Neuroscience (formerly the Redwood Neuroscience Institute) in 2002 and Numenta in 2005. Numenta is based in Redwood City, California, and has a dual mission: to reverse-engineer the neocortex and to enable machine intelligence technology based on brain theory. Hawkins and his cofounders have used biological information about the structure of the neocortex to guide the development of their theory of how the brain works. From this work they developed a machine intelligence technology called hierarchical temporal memory (HTM), which can find patterns in noisy streaming data, model their latent causes, and predict which patterns will come next. Hawkins is the author of the book On Intelligence, which explains his memory-prediction framework theory of the brain. In 2003, Hawkins was elected a member of the National Academy of Engineering “for the creation of the hand-held computing paradigm and the creation of the first commercially successful example of a hand-held computing device.” He also serves on the Advisory Board of the Secular Coalition for America, where he has advised on the acceptance and inclusion of nontheism in American life.

36. Geoffrey Hinton: Ph.D. University of Edinburgh, h-index: 156, #citations: 375,837

Geoffrey Hinton: Emeritus Distinguished Professor of Computer Science, University of Toronto

Geoffrey Everest Hinton is an English Canadian cognitive psychologist and computer scientist, most noted for his work on artificial neural networks. Since 2013, he has divided his time between Google (Google Brain) and the University of Toronto. In 2017, he co-founded the Vector Institute in Toronto and became its Chief Scientific Advisor. With David Rumelhart and Ronald J. Williams, Hinton co-authored a highly cited 1986 paper that popularized the backpropagation algorithm for training multi-layer neural networks, although they were not the first to propose the approach. Hinton is viewed by some as a leading figure in the deep learning community. AlexNet, designed by his student Alex Krizhevsky for the 2012 ImageNet challenge, achieved a dramatic image-recognition milestone that helped revolutionize the field of computer vision. Hinton was awarded the 2018 Turing Award alongside Yoshua Bengio and Yann LeCun for their work on deep learning, and the three are referred to by some as the “Godfathers of AI” and the “Godfathers of Deep Learning”. He received his Ph.D. in artificial intelligence from the University of Edinburgh in 1978, for research supervised by Christopher Longuet-Higgins. After his Ph.D., he worked at the University of Sussex, the University of California, San Diego, and Carnegie Mellon University. He was the founding director of the Gatsby Charitable Foundation Computational Neuroscience Unit at University College London, and is currently a professor in the computer science department at the University of Toronto. He holds a Canada Research Chair in Machine Learning and is currently an advisor for the Learning in Machines & Brains program at the Canadian Institute for Advanced Research. Hinton joined Google in March 2013 when his company, DNNresearch Inc., was acquired.
While Hinton was a professor at Carnegie Mellon University (1982–1987), David E. Rumelhart, Hinton, and Ronald J. Williams applied the backpropagation algorithm to multi-layer neural networks. Their experiments showed that such networks can learn useful internal representations of data. During the same period, Hinton co-invented Boltzmann machines with David Ackley and Terry Sejnowski. His other contributions to neural network research include distributed representations, time delay neural networks, mixtures of experts, Helmholtz machines, and Product of Experts. Notable former Ph.D. students and postdoctoral researchers from his group include Richard Zemel, Brendan Frey, Radford M. Neal, Ruslan Salakhutdinov, Ilya Sutskever, Yann LeCun, and Zoubin Ghahramani. Here are some of his honors and awards:

- 1998: elected a Fellow of the Royal Society (FRS)

- 2001: the first winner of the Rumelhart Prize

- 2001: awarded an Honorary Doctorate from the University of Edinburgh

- 2005: IJCAI Award for Research Excellence (lifetime achievement)

- 2011: Herzberg Canada Gold Medal for Science and Engineering

- 2013: awarded an Honorary Doctorate from the Université de Sherbrooke

- 2016: elected a foreign member of the National Academy of Engineering

- 2016: IEEE/RSE Wolfson James Clerk Maxwell Award

- 2016: BBVA Foundation Frontiers of Knowledge Award

- 2018: Turing Award (with Yann LeCun and Yoshua Bengio)

- 2018: awarded a Companion of the Order of Canada

37. Sepp Hochreiter: Ph.D. Technical University of Munich, h-index: 40, #citations: 51,780

Sepp Hochreiter: Leads the Institute for Machine Learning at the Johannes Kepler University of Linz

Sepp Hochreiter is a German computer scientist. Since 2018, he has led the Institute for Machine Learning at the Johannes Kepler University of Linz, after leading its Institute of Bioinformatics from 2006 to 2018. In 2017, he became head of the Linz Institute of Technology (LIT) AI Lab, which focuses on advancing research on artificial intelligence. Previously, he was at the Technical University of Berlin, the University of Colorado at Boulder, and the Technical University of Munich. Hochreiter has made numerous contributions to machine learning, deep learning, and bioinformatics. He developed long short-term memory (LSTM), with the first results reported in his diploma thesis in 1991. The main LSTM paper appeared in 1997 and is considered a milestone in the history of machine learning. His analysis of the vanishing and exploding gradient problem helped lay the foundation of deep learning. He contributed to meta-learning and proposed flat minima as preferable solutions when training artificial neural networks, to achieve low generalization error. He developed new activation functions for neural networks, such as exponential linear units (ELUs) and scaled ELUs (SELUs), to improve learning. He contributed to reinforcement learning through actor-critic approaches and his RUDDER method. He applied biclustering methods to drug discovery and toxicology. He extended support vector machines to handle kernels that are not positive definite with the “Potential Support Vector Machine” (PSVM) model, and applied this model to feature selection, especially gene selection for microarray data. Also in biotechnology, he developed “Factor Analysis for Robust Microarray Summarization” (FARMS). Hochreiter also introduced modern Hopfield networks with continuous states and applied them to immune repertoire classification.

38. Thomas Hofmann: Ph.D. University of Bonn, h-index: 60, #citations: 35,416

Thomas Hofmann: full Professor of Data Analytics in the Department of Computer Science at ETH Zurich

Thomas Hofmann has been a full professor of Data Analytics in the Department of Computer Science at ETH Zurich since April 2014. He received his Ph.D. in Computer Science from the University of Bonn, Germany, in 1997. He then worked as a postdoctoral fellow at the Artificial Intelligence Laboratory at MIT and at the International Computer Science Institute of the University of California, Berkeley. In 1999 he joined Brown University in Providence, Rhode Island, as an assistant professor. In 2004 he became director of the Institute for Integrated Publication and Information Systems at the Fraunhofer Institute and Professor of Computer Science at the Technical University of Darmstadt, Germany. In 2006, he moved into the private sector as Director of Engineering of the research department of Google Switzerland in Zurich. In 2001, Hofmann co-founded Recommind, a company specializing in search technology.

39. Jeremy Howard: graduated from the University of Melbourne

Jeremy Howard: Co-founder of fast.ai, a research institute dedicated to making deep learning more accessible

Jeremy Howard is an Australian data scientist and entrepreneur. He began his career in management consulting at McKinsey & Company and AT Kearney. Howard went on to co-found FastMail in 1999 (sold to Opera Software) and Optimal Decisions Group (sold to ChoicePoint). Early in his career, he contributed to open source projects, particularly the Perl programming language, the Cyrus IMAP server, and the Postfix SMTP server. He helped develop the Perl language as chair of the Perl6-data working group and as an author of RFCs. Howard first became involved with Kaggle after becoming the globally top-ranked participant in data science competitions in both 2010 and 2011. He later joined Kaggle, an online community for data scientists, as President and Chief Scientist. Together with Rachel Thomas, he co-founded fast.ai, a research institute dedicated to making deep learning more accessible. Previously, he was the CEO and founder of Enlitic, an advanced machine learning company in San Francisco, California. Howard teaches data science at Singularity University. He is also a Young Global Leader with the World Economic Forum, and spoke at the World Economic Forum Annual Meeting 2014 on “Jobs For The Machines.” Howard advised Khosla Ventures as their Data Strategist, identifying the biggest opportunities for investing in data-driven startups and mentoring their portfolio companies to build data-driven businesses.

40. Thomas S. Huang: Sc.D. MIT, h-index: 159, #citations: 163,621

Thomas S. Huang: Late researcher and professor emeritus at the University of Illinois at Urbana-Champaign (UIUC)

Thomas Shi-Tao Huang was a Chinese-born American electrical engineer and computer scientist. He was a researcher and professor emeritus at the University of Illinois at Urbana-Champaign (UIUC), and one of the leading figures in computer vision, pattern recognition, and human computer interaction. At MIT he worked initially with Peter Elias, who was interested in information theory and image coding, and then with William F. Schreiber. Schreiber supervised both his M.S. thesis, Picture statistics and linearly interpolative coding (1960), and his Sc.D. thesis, Pictorial noise (1963). Huang then joined the faculty of the Department of Electrical Engineering at MIT, where he remained from 1963 to 1973. In 1973 he became an electrical engineering professor and director of the Information and Signal Processing Laboratory at Purdue University, remaining there until 1980. In 1980 he accepted a chair in electrical engineering at UIUC. On April 15, 1996, Huang became the first William L. Everitt Distinguished Professor in Electrical & Computer Engineering at UIUC. He was involved with the Coordinated Science Laboratory (CSL), and served as head of the Image Formation and Processing Group of the Beckman Institute for Advanced Science and Technology and co-chair of the Beckman Institute’s research track on Human Computer Intelligent Interaction. By 2012, he held a Swanlund Chair, the highest endowed title at UIUC. Huang received numerous honors and awards in his career, including:

- Foreign Member, Chinese Academy of Sciences, 2002

- Foreign Member, Chinese Academy of Engineering, 2001

- Member, United States National Academy of Engineering, 2001

- Fellow, Japan Society for the Promotion of Science, 1986

- Fellow, SPIE

- Fellow, International Association of Pattern Recognition (IAPR)

- Fellow, Optical Society of America, 1986

- Life Fellow, IEEE, 1979

- Azriel Rosenfeld Award, 2011

- HP Innovation Research Award, 2009

- Okawa Prize for Information and Telecommunications Technology, 2005

- King-Sun Fu Prize, International Association of Pattern Recognition (IAPR), 2002

- Information Science Award, Association of Intelligent Machinery, 2002

- IEEE Jack S. Kilby Signal Processing Medal, 2001 (together with Arun N. Netravali)

- IEEE Achievement Award for Contributions to Motion Analysis, 2000

- IEEE Third Millennium Medal, 2000

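41. Anil K. Jain: Ph.D. Ohio State University, h-index: 190, #citations: 212,206

Anil K. Jain: University Distinguished Professor in the Department of Computer Science & Engineering at Michigan State University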

Anil K. Jain is an Indian-American computer scientist and University Distinguished Professor in the Department of Computer Science & Engineering at Michigan State University, known for his contributions to pattern recognition, computer vision, and biometric recognition. Based on his Google Scholar profile, a survey published by UCLA listed his h-index as 188, the highest among the computer scientists it identified. In 2016, he was elected to the National Academy of Engineering and the Indian National Academy of Engineering “for contributions to the engineering and practice of biometrics”. In 2007, he received the W. Wallace McDowell Award, the highest technical honor awarded by the IEEE Computer Society, for his pioneering contributions to the theory, technique, and practice of pattern recognition, computer vision, and biometric recognition systems. He has also received numerous other awards, including the Guggenheim Fellowship, Humboldt Research Award, IAPR Pierre Devijver Award, Fulbright Fellowship, IEEE Computer Society Technical Achievement award, IAPR King-Sun Fu Prize, and IEEE ICDM Research Contribution Award. He is a Fellow of the ACM, IEEE, AAAS, IAPR and SPIE. He also received best paper awards from the IEEE Transactions on Neural Networks (1996) and the Pattern Recognition journal (1987, 1991, and 2005). He served as a member of the U.S. National Academies panels on Information Technology, Whither Biometrics, and Improvised Explosive Devices (IED). He also served as a member of the Defense Science Board, Forensic Science Standards Board, and AAAS Latent Fingerprint Working Group. In 2019, he was elected a Foreign Member of the Chinese Academy of Sciences.

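42. Thorsten Joachims: Ph.D. University of Dortmund, h-index: 70, #citations: 64,509

Thorsten Joachims: Professor in the Department of Computer Science and the Department of Information Science at Cornell University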

Thorsten Joachims is a Professor in the Department of Computer Science and in the Department of Information Science at Cornell University, where he has served a two-year term as Chair of the Department of Information Science. He joined Cornell in 2001 after completing his Ph.D. as a student of Prof. Morik in the AI Unit of the University of Dortmund, where he also received a Diploma in Computer Science in 1997. Between 2000 and 2001 he worked as a postdoc at the GMD in the Knowledge Discovery Team of the Institute for Autonomous Intelligent Systems. From 1994 to 1996 he spent one and a half years at Carnegie Mellon University as a visiting scholar of Prof. Tom Mitchell. Papers by Joachims and his students and collaborators have won 9 Best Paper Awards and 4 Test-of-Time Awards. He is an ACM Fellow, AAAI Fellow, and Humboldt Fellow. His research topics include machine learning methods and theory, learning from human behavioral data and implicit feedback, and machine learning for search engines, recommendation, education, and other human-centered tasks.

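43. Michael Jordan: Ph.D. University of California, San Diego, h-index: 172, #citations: 189,301

Michael Jordan: Professor in the Department of EECS and the Department of Statistics at the University of California, Berkeley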

Michael Irwin Jordan is an American scientist, professor at the University of California, Berkeley, and researcher in machine learning, statistics, and artificial intelligence. He is one of the leading figures in machine learning, and in 2016 Science reported him as the world’s most influential computer scientist. Jordan received his Ph.D. in Cognitive Science in 1985 from the University of California, San Diego, where he was a student of David Rumelhart and a member of the PDP Group in the 1980s. He was a professor in the Department of Brain and Cognitive Sciences at MIT from 1988 to 1998, and is currently a full professor at the University of California, Berkeley, where his appointment is split across the Department of Statistics and the Department of EECS. In the 1980s Jordan started developing recurrent neural networks as a cognitive model; in recent years, his work has been driven less by a cognitive perspective and more by traditional statistics. Jordan popularised Bayesian networks in the machine learning community and is known for pointing out links between machine learning and statistics. He was also prominent in the formalisation of variational methods for approximate inference and the popularisation of the expectation-maximization algorithm in machine learning. In 2001, Jordan and others resigned from the editorial board of the journal Machine Learning. In a public letter, they argued for less restrictive access and pledged support for a new open access journal, the Journal of Machine Learning Research, which was created by Leslie Kaelbling to support the evolution of the field of machine learning. Jordan has received numerous awards, including a best student paper award (with X. Nguyen and M. Wainwright) at the International Conference on Machine Learning (ICML 2004), a best paper award (with R. Jacobs) at the American Control Conference (ACC 1991), the IEEE Neural Networks Pioneer Award, and an NSF Presidential Young Investigator Award. In 2010 he was named a Fellow of the Association for Computing Machinery “for contributions to the theory and application of machine learning.” Jordan is a member of the National Academy of Sciences, the National Academy of Engineering, and the American Academy of Arts and Sciences. He has been named a Neyman Lecturer and a Medallion Lecturer by the Institute of Mathematical Statistics. He received the ACM/AAAI Allen Newell Award in 2009 and the David E. Rumelhart Prize in 2015, and won the 2020 IEEE John von Neumann Medal.

44. Leslie P. Kaelbling: Ph.D. Stanford, h-index: 60, #citations: 29,344

Leslie Pack Kaelbling is an American roboticist and the Panasonic Professor of Computer Science and Engineering at the Massachusetts Institute of Technology. She is widely recognized for adapting partially observable Markov decision processes from operations research for application in artificial intelligence and robotics. Kaelbling received the IJCAI Computers and Thought Award in 1997 for applying reinforcement learning to embedded control systems and developing programming tools for robot navigation. In 2000, she was elected as a Fellow of the Association for the Advancement of Artificial Intelligence. Kaelbling received a Ph.D. in Computer Science in 1990 from Stanford University. During this time she was also affiliated with the Center for the Study of Language and Information. She then worked at SRI International and the affiliated robotics spin-off Teleos Research before joining the faculty at Brown University. She left Brown in 1999 to join the faculty at MIT. Her research focuses on decision-making under uncertainty, machine learning, and sensing with applications to robotics.

45. Andrej Karpathy: Ph.D. Stanford, h-index: 11, #citations: 30,033

Andrej Karpathy is the director of artificial intelligence and Autopilot Vision at Tesla. He specializes in deep learning and in image recognition and understanding. Andrej Karpathy was born in Slovakia and moved with his family to Toronto when he was 15. He completed undergraduate degrees in Computer Science and Physics at the University of Toronto in 2009 and a master’s degree at the University of British Columbia in 2011, where he worked on physically-simulated figures. He received his Ph.D. from Stanford University in 2015 under the supervision of Fei-Fei Li, focusing on the intersection of natural language processing and computer vision and on deep learning models suited to this task. He joined the artificial intelligence group OpenAI as a research scientist in September 2016 and became Tesla’s director of artificial intelligence in June 2017. Karpathy was named one of the MIT Technology Review Innovators Under 35 for the year 2020.

46. Pushmeet Kohli: Ph.D. Oxford, h-index: 80, #citations: 30,430

Pushmeet Kohli is a Principal Scientist and Team Leader at DeepMind. Before joining DeepMind, Pushmeet was the director of research at the Cognition group at Microsoft. During his 10 years at Microsoft, Pushmeet worked in Microsoft labs in Seattle, Cambridge and Bangalore and held a number of roles, including technical advisor to Rick Rashid, the Chief Research Officer of Microsoft. Pushmeet’s research revolves around Intelligent Systems and Computational Sciences, and he publishes in the fields of Machine Learning, Computer Vision, Information Retrieval, and Game Theory. His current research interests include 3D Reconstruction and Rendering, Probabilistic Programming, and Interpretable and Verifiable Knowledge Representations from Deep Models. He is also interested in conversational agents for task completion, machine learning systems for healthcare, and 3D rendering and interaction for augmented and virtual reality. Pushmeet has won a number of awards and prizes for his research. His Ph.D. thesis, titled “Minimizing Dynamic and Higher Order Energy Functions using Graph Cuts”, won the British Machine Vision Association’s “Sullivan Doctoral Thesis Award” and was a runner-up for the British Computer Society’s “Distinguished Dissertation Award”. Pushmeet’s papers have appeared in Computer Vision (ICCV, CVPR, ECCV, PAMI, IJCV, CVIU, BMVC, DAGM), Machine Learning, Robotics and AI (NIPS, ICML, AISTATS, AAAI, AAMAS, UAI, ISMAR), Computer Graphics (SIGGRAPH, Eurographics), and HCI (CHI, UIST) venues. They have won awards at ICVGIP 2006 and 2010, ECCV 2010, ISMAR 2011, TVX 2014, CHI 2014, WWW 2014 and CVPR 2015. His research has also been the subject of a number of articles in popular media outlets such as Forbes, Wired, BBC, New Scientist and MIT Technology Review. Pushmeet is part of the Association for Computing Machinery’s (ACM) Distinguished Speaker Program.

47. Daphne Koller: Ph.D. Stanford, h-index: 142, #citations: 87,504

Daphne Koller is an Israeli-American computer scientist. She has been a Professor in the Department of Computer Science at Stanford University and is a MacArthur Fellowship recipient. She is one of the founders of Coursera, an online education platform. Her general research area is artificial intelligence and its applications in the biomedical sciences. Koller was featured in a 2004 article by MIT Technology Review titled “10 Emerging Technologies That Will Change Your World” on the topic of Bayesian machine learning. Koller received a bachelor’s degree from the Hebrew University of Jerusalem in 1985, at the age of 17, and a master’s degree from the same institution in 1986, at the age of 18. She completed her Ph.D. at Stanford in 1993 under the supervision of Joseph Halpern. After her Ph.D., Koller did postdoctoral research at the University of California, Berkeley. She was elected a member of the National Academy of Engineering in 2011 and a fellow of the American Academy of Arts and Sciences in 2014. She and Andrew Ng, a fellow Stanford computer science professor in the AI lab, launched Coursera in 2012. She served as co-CEO with Ng, and then as President of Coursera. She was recognized for her contributions to online education by being named one of Newsweek’s 10 Most Important People in 2010, Time magazine’s 100 Most Influential People in 2012, and Fast Company’s Most Creative People in 2014. She left Coursera in 2016 to become chief computing officer at Calico. In 2018, she left Calico to start and lead Insitro, a drug discovery startup. Koller is primarily interested in representation, inference, learning, and decision making, with a focus on applications to computer vision and computational biology. Along with Suchi Saria and Anna Penn of Stanford University, Koller developed PhysiScore, which uses various data elements to predict whether premature babies are likely to have health issues. Here is the list of books recommended by Dr. Koller on Doradolist.

48. Alex Krizhevsky: Ph.D. University of Toronto, h-index: 25, #citations: 111,580

Alex Krizhevsky is a Ukrainian-Canadian computer scientist most noted for his work on artificial neural networks and deep learning. He is the creator of AlexNet, a convolutional neural network, which he published with Ilya Sutskever and his doctoral advisor, Geoffrey Hinton. Shortly after AlexNet won the 2012 ImageNet challenge, he and his colleagues sold their startup DNN Research Inc. to Google. Krizhevsky left Google in September 2017 when he lost interest in the work. He then joined the company Dessa to advise on and help research new deep-learning techniques. His papers on machine learning and computer vision are frequently cited by other researchers.

49. John Langford: Ph.D. Carnegie Mellon University, h-index: 66, #citations: 37,885

John Langford is a computer scientist working in machine learning and learning theory, a field that he says “is shifting from an academic discipline to an industrial tool”. He is well known for work on the Isomap embedding algorithm, CAPTCHA challenges, Cover Trees for nearest neighbor search, Contextual Bandits (a term he coined) for reinforcement learning applications, and learning reductions. John is the author of the blog hunch.net and the principal developer of Vowpal Wabbit. He works at Microsoft Research New York, of which he was one of the founding members, and was previously affiliated with Yahoo! Research, the Toyota Technological Institute at Chicago, and IBM’s Watson Research Center. He studied Physics and Computer Science at Caltech, earning a double bachelor’s degree in 1997, and received his Ph.D. in Computer Science from Carnegie Mellon University in 2002. John was the program co-chair for the 2012 International Conference on Machine Learning (ICML), general chair for the 2016 ICML, and President of ICML from 2019 to 2021.

50. Hugo Larochelle: Ph.D. Université de Montréal, h-index: 51, #citations: 35,482

Hugo Larochelle currently leads the Google Brain group in Montreal. His main area of expertise is deep learning. His previous work includes unsupervised pre-training with autoencoders, denoising autoencoders, visual attention-based classification, and neural autoregressive distribution models. More broadly, he is interested in applications of deep learning to generative modeling, reinforcement learning, meta-learning, natural language processing and computer vision. Previously, Hugo was Associate Professor at the Université de Sherbrooke (UdeS). He also co-founded Whetlab, which was acquired in 2015 by Twitter, where he then worked as a Research Scientist in the Twitter Cortex group. From 2009 to 2011, he was also a member of the machine learning group at the University of Toronto, as a postdoctoral fellow under the supervision of Geoffrey Hinton. He obtained his Ph.D. at the Université de Montréal, under the supervision of Yoshua Bengio. His academic involvement includes being a member of the boards for the International Conference on Machine Learning (ICML) and for the International Conference on Learning Representations (ICLR). He was program chair for ICLR 2015, 2016 and 2017, and program chair for the Neural Information Processing Systems (NeurIPS) conference for 2018 and 2019. Dr. Larochelle has a popular online course on deep learning and neural networks, freely accessible on YouTube.

51. Yann LeCun: Ph.D. Pierre and Marie Curie University, Paris, h-index: 124, #citations: 162,769